SECURITIES

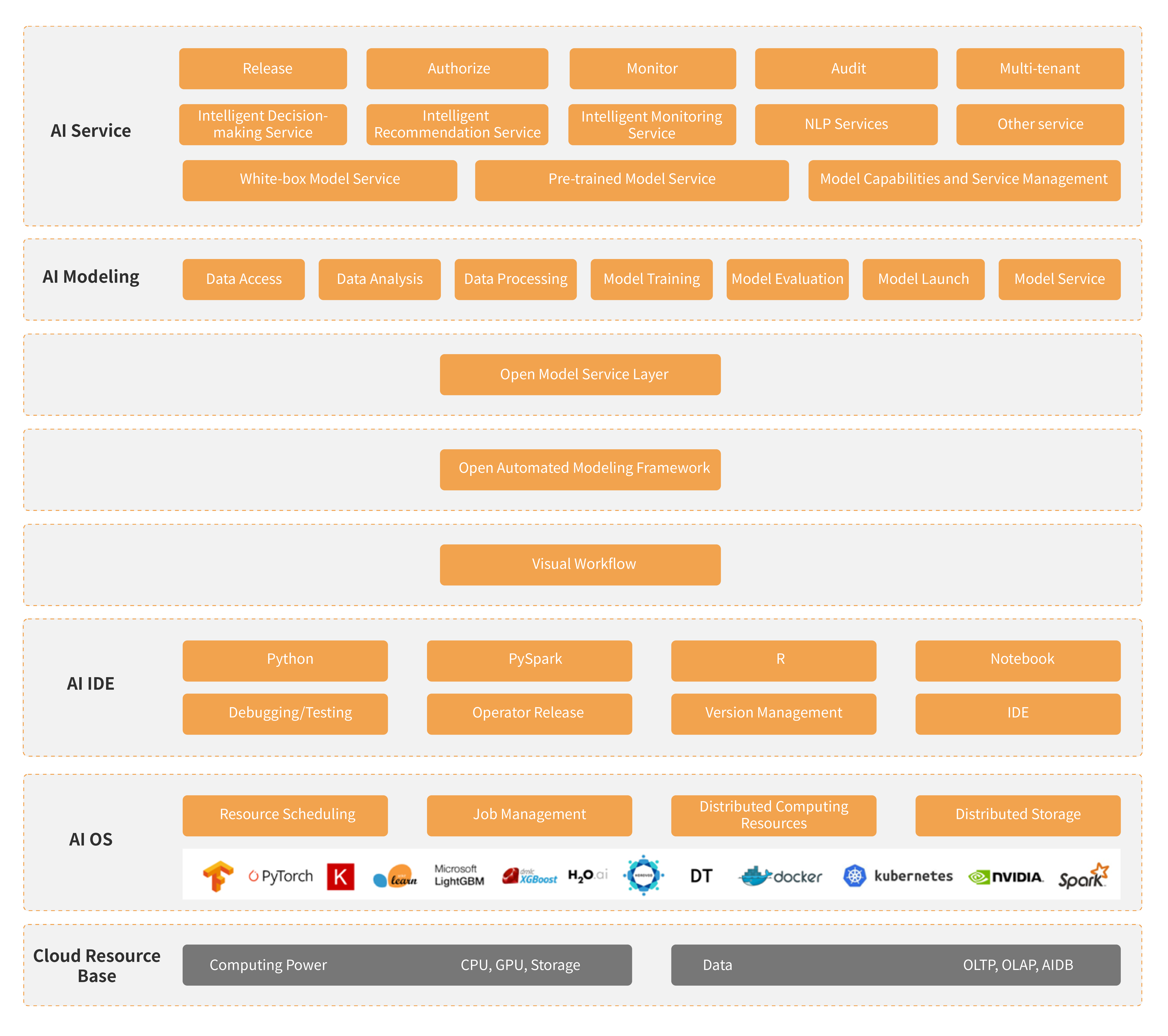

DataCanvas APS (AI Infrastructure Platform Service), independently developed by DataCanvas, adopts an open infrastructure design. It can quickly adapt to users' existing human resources and system assets, and it lets users extend and enhance the platform's functions according to their own needs.

In terms of infrastructure, APS supports mainstream machine learning and deep learning frameworks, mainstream data storage systems, resource scheduling and container orchestration systems, and CPU/GPU computing, as well as customized private development environments. For model development, it provides a rich set of preset operators and source code and supports development in multiple languages, such as Python, R, PySpark, and SparkSQL. For automatic modeling, it comes with ready-made scenarios such as image recognition, time-series forecasting, NLP, and general-purpose tasks, and it supports the development of custom scenarios. For model management, it provides unified management of internal and external models, including model import, evaluation, visualization, and comparison. For model serving, it supports deploying multiple models and can export models or SDKs, greatly improving model utilization.

-

Self-service runtime environment design

Our platform opens runtime environment design to users, allowing them to build Docker images that include specific programming environments, frameworks, middleware, services, and so on, to meet enterprises' ongoing needs for technology integration and upgrades.

-

Scalable and reusable module library

Custom modules are encapsulated and released as Docker containers, so they can be written once and reused many times. This makes developing new models more efficient, and the accumulated module library becomes an important intellectual asset for the enterprise.

-

Trinity modeling approach

Integrates three modeling methods: code-based modeling for data scientists, drag-and-drop modeling for IT engineers, and automatic modeling for business users.

-

Automatic model release to production

Automatically selects the best model for the current situation and publishes it, provides standard REST, gRPC, MQ, and batch prediction capabilities, and integrates models into intelligent applications through SDK export.
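This page does not say how APS decides which model is "optimal". As a rough, hypothetical illustration only, the sketch below picks the best candidate by a single validation metric and promotes it only if it beats the model currently in production. The names `Candidate`, `select_best`, and `maybe_promote`, and the metric names, are assumptions for illustration, not part of the APS API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Candidate:
    # Hypothetical record of a trained model and its validation metrics.
    name: str
    metrics: Dict[str, float] = field(default_factory=dict)


def select_best(candidates: List[Candidate], metric: str,
                higher_is_better: bool = True) -> Candidate:
    """Return the candidate with the best value of `metric`."""
    scored = [c for c in candidates if metric in c.metrics]
    if not scored:
        raise ValueError(f"no candidate reports metric {metric!r}")

    def score(c: Candidate) -> float:
        return c.metrics[metric]

    return max(scored, key=score) if higher_is_better else min(scored, key=score)


def maybe_promote(current: Optional[Candidate], challenger: Candidate,
                  metric: str, higher_is_better: bool = True) -> Candidate:
    """Keep the production model unless the challenger is strictly better."""
    if current is None:
        return challenger
    a, b = challenger.metrics[metric], current.metrics[metric]
    better = a > b if higher_is_better else a < b
    return challenger if better else current
```

For example, `select_best(candidates, "auc")` picks the highest-AUC model, while `select_best(candidates, "rmse", higher_is_better=False)` picks the lowest error. Comparing against the current production model before publishing avoids replacing a good model with a worse one.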

-

Multi-directional security guarantees

Supports deployment in private environments; supports multi-level access control by user, role, and workspace to ensure data security; and supports tracing of actions such as access, editing, and operations to ensure accountability.
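The page does not describe how APS implements its access control. A minimal, hypothetical sketch of the general idea, assuming each user is bound to one role per workspace and every check is recorded for later tracing, might look like this. The role names, permission names, and the `AccessControl` class are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Hypothetical roles and the actions each one allows.
ROLE_PERMISSIONS: Dict[str, set] = {
    "viewer": {"read"},
    "developer": {"read", "edit"},
    "admin": {"read", "edit", "operate"},
}


@dataclass
class AccessControl:
    # (user, workspace) -> role; a user may hold different roles in different workspaces.
    bindings: Dict[Tuple[str, str], str] = field(default_factory=dict)
    # Every access decision is appended here so actions can be traced afterwards.
    audit_log: List[Tuple[str, str, str, bool]] = field(default_factory=list)

    def grant(self, user: str, workspace: str, role: str) -> None:
        if role not in ROLE_PERMISSIONS:
            raise ValueError(f"unknown role {role!r}")
        self.bindings[(user, workspace)] = role

    def check(self, user: str, workspace: str, action: str) -> bool:
        role = self.bindings.get((user, workspace))
        allowed = role is not None and action in ROLE_PERMISSIONS[role]
        self.audit_log.append((user, workspace, action, allowed))
        return allowed
```

Scoping roles to workspaces means the same person can be an admin in one project and a read-only viewer in another, and logging denied attempts as well as allowed ones is what makes the trail useful for accountability.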

-

Automated operations and maintenance

Automated deployment, with cluster size adjusted dynamically based on demand; automated scheduling, supporting timed or periodic execution; and global monitoring that gives timely visibility into the execution status of scheduled jobs.
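How APS schedules periodic jobs is not described here. As a hypothetical illustration of periodic execution in general, the sketch below computes the upcoming run times of a job that fires at a fixed interval from a start time. The function name `next_runs` and its parameters are assumptions, not an APS interface.

```python
from datetime import datetime, timedelta
from typing import List


def next_runs(start: datetime, interval: timedelta, now: datetime,
              count: int = 3) -> List[datetime]:
    """Return the next `count` run times strictly after `now`
    for a job that fires at start, start + interval, start + 2*interval, ..."""
    if interval <= timedelta(0):
        raise ValueError("interval must be positive")
    if now < start:
        first = start
    else:
        # timedelta // timedelta yields an int: the number of whole intervals elapsed.
        k = (now - start) // interval + 1
        first = start + k * interval
    return [first + i * interval for i in range(count)]
```

Anchoring run times to the original start time, rather than to when the previous run finished, keeps the schedule from drifting when individual runs are slow.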

-

Putting models into production

Enterprise knowledge integration

Team collaboration mode

- Try AIFS: AI Foundation Software & Services

- Hotline: +86 400-805-7188

- E-mail: contact@zetyun.com

- Join Us: hr@zetyun.com